If Large Language Models (LLMs) are the "brains" of AI, then Vector Databases are the "long-term memory". Without them, a model is limited to what it learned during training plus whatever fits in its prompt. With them, an application can retrieve your personal documents, your business data, and past interactions on demand.

Why Not Just Use SQL?

Traditional databases are great for exact matches. If you search for "Blue Shirt", SQL finds every row where the description contains those exact words.

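But what if you search for "something to wear on a sunny day at the beach"? A keyword match returns nothing, because no row contains those words. A Vector Database can still return relevant items, because it compares embeddings that capture the meaning (semantic value) of the text rather than its exact wording.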
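To make the keyword limitation concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The table name, the product rows, and the `keyword_search` helper are made up for illustration; the point is only that a `LIKE` filter matches literal substrings.

```python
import sqlite3

# In-memory table with hypothetical product rows (illustrative data only).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (id INTEGER PRIMARY KEY, description TEXT)")
conn.executemany(
    "INSERT INTO products (description) VALUES (?)",
    [("Blue Shirt, cotton",), ("Linen sun hat",), ("Swim shorts",)],
)


def keyword_search(phrase):
    """Return descriptions that contain the phrase as a literal substring."""
    rows = conn.execute(
        "SELECT description FROM products WHERE description LIKE ?",
        (f"%{phrase}%",),
    )
    return [row[0] for row in rows]


exact_hits = keyword_search("Blue Shirt")
semantic_hits = keyword_search("something to wear on a sunny day at the beach")

print(exact_hits)     # ['Blue Shirt, cotton']
print(semantic_hits)  # []
```

The sun hat and swim shorts are arguably good answers to the second query, but no substring filter will find them, because the match is on characters, not meaning.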

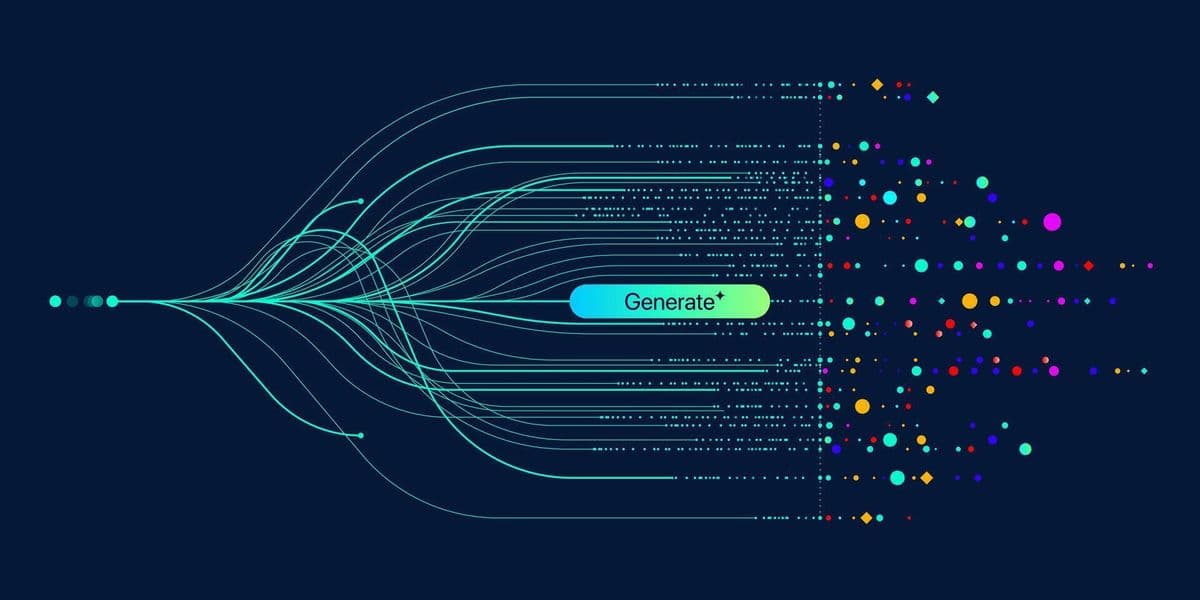

How It Works: The Multi-Dimensional Map

Vector databases store data as Embeddings: long lists of numbers, produced by an embedding model, that each represent a point in a high-dimensional space.

- "Ocean" and "Sea" will be points very close to each other.

- "Ocean" and "Mountain" will be further apart.

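When you ask a question, the query is converted to an embedding as well, and the database returns the stored items whose vectors are closest to it under a distance metric such as cosine similarity or Euclidean distance.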
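A rough sketch of what "closest" can mean in practice, using cosine similarity. The three-dimensional vectors below are hand-made for illustration; real embeddings come from an embedding model and typically have hundreds or thousands of dimensions. Real vector databases also usually rely on approximate indexes rather than the brute-force scan shown here.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical toy embeddings, chosen by hand so that "ocean" and "sea"
# point in nearly the same direction and "mountain" does not.
embeddings = {
    "ocean": [0.9, 0.1, 0.2],
    "sea": [0.85, 0.15, 0.25],
    "mountain": [0.1, 0.9, 0.3],
}


def nearest_word(word):
    """Brute-force nearest neighbour: compare against every other vector."""
    query = embeddings[word]
    candidates = [w for w in embeddings if w != word]
    return max(candidates, key=lambda w: cosine_similarity(query, embeddings[w]))


print(nearest_word("ocean"))  # sea
```

With these toy values, the similarity between "ocean" and "sea" is close to 1, while "ocean" and "mountain" score much lower, which mirrors the two bullets above.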

The Rise of RAG

The most common use case for Vector Databases is Retrieval-Augmented Generation (RAG). Instead of training a new model on your data (which is expensive and slow), you:

- Split your data into chunks, embed each chunk, and store the vectors in a Vector Database.

- Search for relevant chunks when a user asks a question.

- Feed those chunks to the LLM as context.
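The three steps above can be sketched in a few lines. Everything here is a stand-in: `embed` is a toy bag-of-words counter rather than a real embedding model (so it matches on shared words, not meaning), `store` is a plain Python list rather than a vector database, and the final call to an LLM is left out, so the sketch stops at the assembled prompt. The sample chunks are invented.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a sparse word-count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Step 1: store each chunk next to its vector (hypothetical sample data).
chunks = [
    "Refunds are issued within 14 days of purchase.",
    "Our office is closed on public holidays.",
    "Shipping to Europe takes 5 to 7 business days.",
]
store = [(chunk, embed(chunk)) for chunk in chunks]


def retrieve(question, k=1):
    """Step 2: return the k stored chunks most similar to the question."""
    query = embed(question)
    ranked = sorted(store, key=lambda item: cosine(query, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


def build_prompt(question, k=1):
    """Step 3: place the retrieved chunks in front of the question."""
    context = "\n".join(retrieve(question, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


prompt = build_prompt("How long does shipping to Europe take?")
print(prompt)
```

In a real system, `embed` would call an embedding model and `store` would be a vector database, but the shape of the pipeline stays the same: embed, retrieve, then prompt.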

Conclusion

In 2025, if you are building an AI application, you are very likely building on vector search in some form. Whether you use a dedicated solution like Pinecone or an extension like pgvector, understanding vector search is no longer optional for software engineers.